Semi-Supervised Approach for Transformers

Transformers models have become the go-to model for NLP tasks. In this article, we will go through the end-to-end process of training highly robust NLP models using Transformers architecture, following a top-down approach. We always try to use a semi-supervised approach to train an NLP model be it classification or generation.

Majorly, we will be discussing fine-tuning BERT, and Text Classification. Let’s start by getting an overall picture and then get into details.

For data augmentation with Text, read this artice.

Understanding the Semi-Supervised Transformers Architecture

Training a semi-supervised model requires 2 steps:

- Train the unsupervised part first. That is training the language model. For transformers, it can be fine-tuning BERT, GPT, etc. For this part of training, we only need unlabeled data or sentences. This step adjusts the weights of the model so that it can understand the context of the data we want to train it on. Context can be fashion, news, sports, etc. For example, the word “sleeping” can have a different context when we are trying to train a model for “fashion” v/s a model for “news”.

- Train the supervised part second. That is train the model with the labeled data. If the first part had a huge amount of data, the supervised part will have high accuracy even with a low amount of data, but vice versa is not true.

The below diagram is a GIF of semi-supervised training. First, we fine-tune BERT, then we train a Text Classifier or a Named Entity Recognition model. It gives a visual idea of the linkage between fine-tuning language model and then using the fine-tuned model for classification.

Let’s go over all the components of the above diagram:

Embeddings Layer:

The main job of the embedding layer is to convert the textual input data to a model understandable format. It has three main components:

- word embeddings: This is to get the matrix representation of each word. For BERT this has a vocabulary size of 30,522 and an array of 768 values represents a word. So, if the input is of 4 words, the output of word embeddings will be 4 x 768.

- position embeddings: This is used to get the position of the word. In transformers, the position of the word is passed as input as the context of the sentence is highly dependent on the position of the word in the sentence.

- linear layer: This layer is a linear convolution layer, to merge the layers and finally provide a matrix with 768 features.

Encoder Layers:

There are a total of 11 BERT Layers in this encode layer.

Each layer has 2 main components:

- Self Attention Layer: This is the core of the Transformers model that helps in determining the importance of each word w.r.t all the other words in the sentence and in the overall context of the sentence. The diagram below shows an embedding being used as the key (K), query (Q), and value (V). K, Q, and V go through a couple of layers of convolution, dot-product, and softmax to get the desired output. Self-Attention in itself is a topic that requires much more explanation. If you are not aware of self-attention I suggest you watch this youtube video for a better understanding.

This layer takes an input of 768 features, does a multi-head attention operation, and outputs the matrix with 768 features.

- Convolution Layers: These layers are followed by the self-attention layer. It includes a couple of dense layers, layer normalization, dropout, and GELU Activation functions. The number of input and output features for these layers is 768.

Context Vectors:

Not by the definition, but the output of the above 2 layers, embeddings and encodings layers, gives out a matrix with 768 features which is the context of the sentence, therefore we will refer to this as the context vector. This is the most important part of the model, as all the training done in the unsupervised part was to train the weights to get context vectors correctly.

In models such as RNN and LSTM, the input to the model is word embeddings which are unable to capture the context of the sentence. So we train a neural network on top of the word embeddings layer to capture the context of the sentence. Now, this output captures the context with 768 features. This output can be passed as input to classification or generation models making the overall model highly robust and accurate.

Language Models:

This is an unsupervised part of training. Additional convolutional layers are added to the output of the context vectors. The final output of this model is the matrix with vocabulary size i.e. 30,522. This model needs to predict a word from the existing vocabulary. We feed millions of unlabeled sentences and allow the model to adjust the weights to get appropriate context vectors.

There are two ways to train a language model:

Masked Language Model:

We mask 10–30% of the input sentence and ask the model to predict the masked words. As you can see in the below diagram the input sentence is “women floral printed [MASK] top”.

Convolution layers are added to the encoder layer to predict the missing word.

The output of the model is the matrix with vocab size (30,522 in this case) to predict the missing word. Based on the model’s prediction loss is computed and the weights of the models are adjusted.

After training a BERT language model using Masked Language Model technique for the fashion domain with 20 million sentences, you can clearly observe the difference in the below GIF.

Input: women [MASK] top

BERT (Fashion): women floral printed crop top

BERT (Original): women who on were top

Casual Language Models:

With CLM, the model tries to predict the next word from the existing vocabulary and compares it with the true output. Generally, CLM is used for generative models i.e. GPT architectures. We are not covering GPT-based architectures in this article, but you can have a look at the GIF below showing the difference between a fashion-specific GPT2 model v/s the GPT2 model with original weights.

Once BERT/GPT is fine-tuned with the custom dataset, the second part is to train the classification and generation models over it.

Although while training the weights of the entire model gets updated, for our understanding, we can assume that the context vector is now the input to the models.

Text Classification

It is a simple classification problem now. In the below diagram, dense layers have been added to the output of context vectors, and finally reduced the features to the number of classes. The better the context layer output, the more robust and accurate the model will be.

When we are dealing with Transformers, we can also get the word-level confidence scores that contributed to the prediction of the said class.

For the sentence pantaloons green shirt with jacket, wear it with black jeans, we are trying to predict a fashion category. The above sentence has lots of categories in it including shirts, jackets, and jeans. But as you can see in the first example it is correctly predicting shirts, with the word shirt having the highest confidence. In other examples, we have changed the ground truth to different labels and then tried to observe the behavior. With changing labels, the word level confidence of the sentence changes, and it automatically points to the words that are contributing positively to the prediction of the new labels. For e.g. in the second example when we change the True Label to jeans, the confidence of the word jeans becomes positive and other words such as shirt and jacket become negative. This is the beauty of the self-attention models.

Named Entity Recognition

Same as text classification, we assume the context vector as input and write the layers for the NER task. The NER model predicts labels for each input word.

Since the data is fashion specific, the labels are granular. NER also helps is phrase discovery, which in turn leads to trends discovery. There are lots of methods for NER, but the scope of this article is to understand the semi-supervised approach.

Now that we have gone through all the components, I suggest, you look again at the Transformers end-to-end training GIF.

The code for training is available at Hugging face.

- Fine Tune Langauge Model: Colab Notebook

- Train a Text Classification Model: Colab Notebook

- Train a NER Model: Colab Notebook

In this article, I have tried to give an idea of the semi-supervised training that we can do with transformers to train highly accurate models. Let me know your thoughts on it.

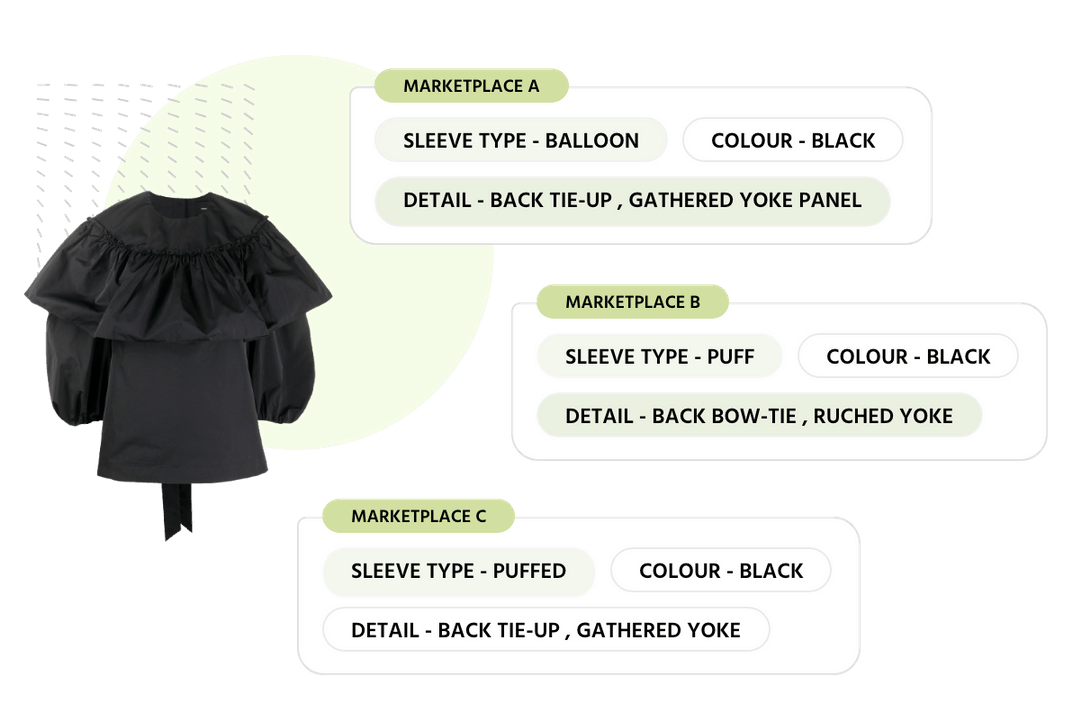

Importance of Product Taxonomy: Role of AI in Automating & Improving Taxonomies