Event-Driven Scalability in a Data Processing Pipeline

Building a data processing pipeline is one of the most common problem statements. Depending on the amount and frequency of data, you may have written small scripts for it or built a full-fledged scalable system. In this article, we will talk about event-driven scalability: a backbone that is cost-optimized and requires a minimum amount of development and operations.

Why build an event-driven and scalable data processing pipeline?

When working with startups, or building a team or personal project that requires a data processing pipeline, cost is always a constraint. There are two ways to tackle this:

- Write a script or build a small-scale system at the start of the project, then rework it into a large-scale system when requirements grow.

- Build an event-driven and scalable system: when it is not in use it costs nothing, when it is in use the cost is proportional to the usage, and a high-scale requirement is handled easily.

Problem Statement Examples:

- Data creation and pre-processing pipeline for training a Deep Learning model.

- Extraction of metadata from the incoming raw data.

- Crawling data and extracting metadata, etc.

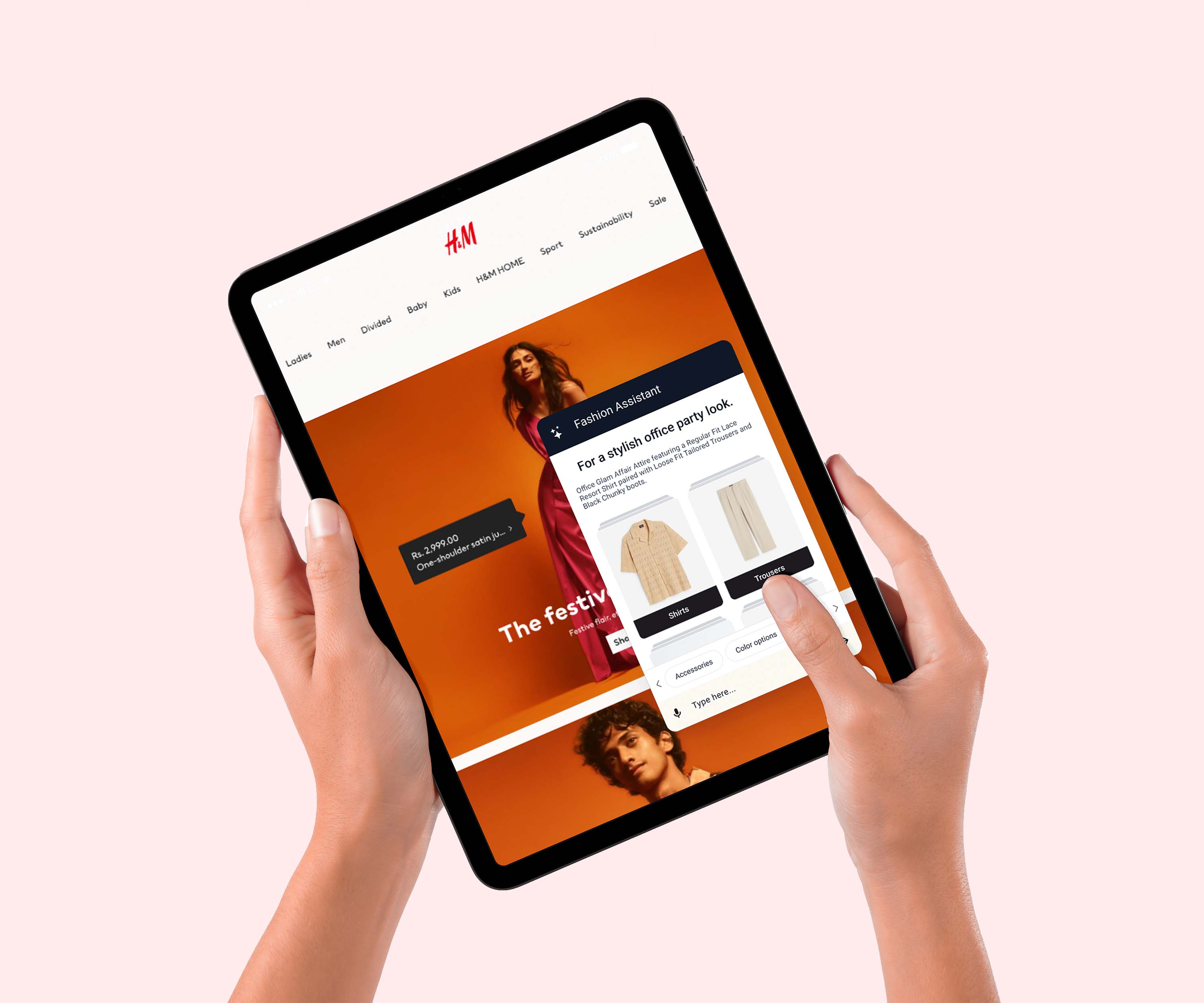

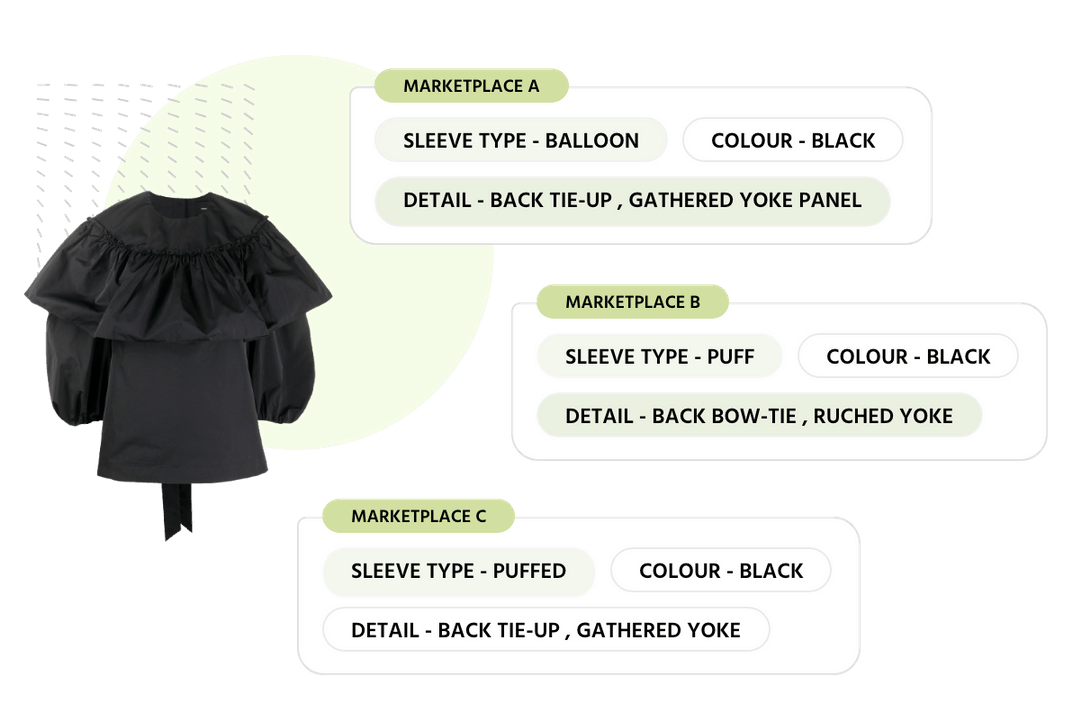

We will go with a simple example: building a metadata extraction system for e-commerce products. The metadata is then used to provide the e-commerce solutions offered by Streamoid. The problem statement is that a client provides their data, and metadata needs to be extracted from it for the internal services to work. Put simply, the internal systems need standard data to function, while the data received from clients is raw and may not adhere to those standards. This calls for a data processing pipeline that converts the raw data into standard metadata that the internal systems understand.

This metadata is used by various systems across the organization to build several products (such as similar and outfitter recommendations), provide marketplace-equivalent data (Amazon, Myntra, Tatacliq, etc.), train multiple classifiers, and understand trends and behavior.

Let’s see an example of the extraction of metadata for an e-commerce product:

Looks simple? Yes, the task is simple:

- Run Text Classification on Title and Description of the product.

- Run Image Classification on Images and output of Text Classification.

- There are many more steps, such as background changes, enrichment, and metadata conversion for other marketplaces such as Amazon, but for simplicity let’s assume there are just two steps: Text Classification and Image Classification.
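As a rough illustration of how the two steps chain together, here is a minimal sketch in Python. The `Product` shape and both classifier functions are hypothetical stand-ins, not the actual services; the only point is that the image step receives the output of the text step.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    title: str
    description: str
    image_urls: list
    metadata: dict = field(default_factory=dict)


def classify_text(product: Product) -> dict:
    # Hypothetical stand-in for the text classifier service.
    text = f"{product.title} {product.description}".lower()
    return {"category": "dress" if "dress" in text else "unknown"}


def classify_images(product: Product, text_result: dict) -> dict:
    # Hypothetical stand-in for the image classifier. It takes the text
    # classification output as an extra input, as in the steps above.
    return {
        "category": text_result["category"],
        "image_count": len(product.image_urls),
    }


def extract_metadata(product: Product) -> Product:
    # Step 1: text classification. Step 2: image classification.
    text_result = classify_text(product)
    image_result = classify_images(product, text_result)
    product.metadata = {"text": text_result, "image": image_result}
    return product
```

Calling `extract_metadata(Product("Red summer dress", "Cotton", ["a.jpg", "b.jpg"]))` fills `metadata` with the results of both steps.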

The challenges come when we combine this data processing pipeline with a business use case:

- Priority-based processing. Enterprise clients have the highest priority, followed by clients on the free tier, and then data for internal evaluation, demo, and training purposes.

- Cost optimization. On some days the number of products to process can be up to 500,000, whereas on other days it is fewer than 1,000. A scalable system’s cost should be directly proportional to the number of products processed on a given day.

- Limited resource usage. The deep learning classifiers require heavy computing and cannot scale infinitely, so these resources must be used fully and efficiently, allocated by priority.
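The priority requirement can be sketched as a mapping from client tier to a numeric priority. The tier names and numbers below are assumptions chosen for illustration, and an in-memory heap stands in for the real queue.

```python
import heapq
import itertools

# Assumed numeric priorities: a lower number is processed first.
TIER_PRIORITY = {"enterprise": 0, "free": 1, "internal": 2}

# Tie-breaker so that products within the same tier keep FIFO order.
_counter = itertools.count()


def enqueue(queue: list, tier: str, product_id: str) -> None:
    heapq.heappush(queue, (TIER_PRIORITY[tier], next(_counter), product_id))


def dequeue(queue: list) -> str:
    _, _, product_id = heapq.heappop(queue)
    return product_id
```

If `demo-1` (internal), `free-1` (free), `ent-1` and `ent-2` (enterprise) are enqueued in that order, they are dequeued as `ent-1`, `ent-2`, `free-1`, `demo-1`.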

Before we move ahead, let’s look at a GIF of the architecture’s backbone, which we will cover in this article. It shows the two processes (Text and Image Classification) in the data processing pipeline.

The diagram below shows two iterations: one shows scaling when there are 10 products to process, and the other when there are 100,000 products.

In this architecture we have used:

- MongoDB as the database (production database used by all the services)

- RabbitMQ as the message broker

- Google Kubernetes with KEDA for RabbitMQ consumer scaling

- Google Cloud Run for heavy compute classifiers and auto-scaling

The diagram can be divided into 4 major components. Let’s discuss each component in detail.

MongoDB

A shared NoSQL database is used so that a huge number of read and write operations can be scaled easily. As you can observe, the database here works like a plugin: if reads and writes need to go to separate or multiple databases, these can easily be plugged in through the Push and Pull services, which are also deployed on Cloud Run.

RabbitMQ

An open-source message broker. There are other message brokers as well, such as Kafka, or cloud-bound message brokers such as Pub/Sub. The reasons for choosing RabbitMQ are:

- It supports priority queues by design, which is one of the important requirements for this pipeline. With other message brokers, priority can be supported through bucketing, meaning that 3 queues with different priority levels are maintained for the same process and the consumers handle the priority requirements.

- It is a push-based system, which means no race conditions between consumers. It assigns messages, based on priority, to all the connected consumers in a round-robin manner, and waits for an acknowledgment that a message was processed successfully before removing it from the queue.

- Broadcasting or notification, for which Kafka or Pub/Sub would have been the better choice, is not a requirement here.
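To make the bucketing alternative concrete, here is a minimal in-memory sketch of three queues for one process, with the consumer enforcing priority. It does not talk to any real broker; the bucket names and the `BucketedQueue` class are hypothetical.

```python
from collections import deque
from typing import Optional

# One bucket (queue) per priority level, highest priority first.
BUCKETS = ("high", "medium", "low")


class BucketedQueue:
    def __init__(self) -> None:
        self._queues = {name: deque() for name in BUCKETS}

    def publish(self, bucket: str, message: str) -> None:
        self._queues[bucket].append(message)

    def consume(self) -> Optional[str]:
        # The consumer enforces priority: a lower bucket is only read
        # when every higher bucket is empty.
        for name in BUCKETS:
            if self._queues[name]:
                return self._queues[name].popleft()
        return None
```

This shows why a broker with built-in priority is convenient here: with bucketing, the ordering logic lives in every consumer instead of in the broker.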

Other message brokers could also be used. The choice of message broker depends on the use case.

Google Kubernetes with KEDA

A Google Kubernetes cluster is used here. We have used an Autopilot cluster, which means there is no need to assign nodes to the cluster for autoscaling. Resource scaling is managed by the cluster itself as pods are created and removed.

We have used KEDA (Kubernetes Event-Driven Autoscaling). The reason for this is RabbitMQ. If scaling needs to happen based on memory/CPU usage or HTTP hits, scaling is easy. But here scaling needs to happen based on the number of messages in the queue. Also, RabbitMQ does not send an invocation; it sends messages to consumers that are already connected. To tackle this challenge we used KEDA, which keeps track of the number of messages in the queue and brings up new pods (RabbitMQ consumers) in the Google Autopilot cluster accordingly.
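The idea of scaling on queue length can be sketched as a small calculation. This is a simplification for illustration, not KEDA's actual implementation; the function name, the per-pod target, and the pod limits are all assumptions.

```python
import math


def desired_consumers(queue_length: int, messages_per_pod: int,
                      min_pods: int = 0, max_pods: int = 50) -> int:
    """Pods needed so that each handles at most `messages_per_pod` messages."""
    if messages_per_pod <= 0:
        raise ValueError("messages_per_pod must be positive")
    wanted = math.ceil(queue_length / messages_per_pod)
    # Clamp between the configured minimum and maximum pod counts.
    return max(min_pods, min(max_pods, wanted))
```

With a target of 100 messages per pod, an empty queue needs 0 consumers, 10 messages need 1, and 100,000 messages are capped at the assumed maximum of 50.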

Google Cloud Run

Cloud Run is used here to handle all the heavy computing, such as the classifiers. It scales down to 0 and scales up based on HTTP invocations. In any pipeline, the major cost comes from renting servers for heavy compute processing and keeping them up even when they are not being used. With Cloud Run, you can deploy your heavy compute services as containers and pay only while you are using them. No operations work is involved once you deploy it. Alternatively, we can use Amazon Elastic Container Service (ECS), which is comparable to Cloud Run, or, if there is a GPU requirement, build the service on a GPU node. Basically, the choice depends on the compute requirements of the service. We use all three, Cloud Run, ECS, and GPU nodes, based on requirements.

I would also like to mention that Cloud Run is probably the best of the cloud scaling solutions available. The primary reason is that it has all the required functionality and is developer-friendly, with deployment taking literally 2 lines of code.

The limitation of this kind of design lies in scaling the heavy computing resources.
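From a consumer's point of view, a heavy compute service is just an HTTP endpoint. The sketch below shows one way a consumer could forward a queue message to such a service. The payload shape and function names are assumptions, and authentication is left out.

```python
import json
import urllib.request
from typing import Callable


def http_post_json(url: str, payload: dict, timeout: float = 60.0) -> dict:
    # Plain HTTP POST; invocations like this are what the service scales on.
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def handle_message(body: bytes, service_url: str,
                   post: Callable[[str, dict], dict] = http_post_json) -> dict:
    # `body` is the raw message a consumer received from the queue.
    product = json.loads(body)
    # Hypothetical payload shape: the product wrapped in a JSON object.
    return post(service_url, {"product": product})
```

Passing the `post` function as a parameter keeps the consumer independent of where the service runs, and makes it easy to swap in a fake during tests.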

Why build this kind of architecture?

- It is an event-driven system, so scaling and cost are incurred only when there is an event. An event is the availability of new data to be processed, an update to existing data, or a request to re-process data.

- All components work like plugins. We can change the database or use multiple databases. We can change the message broker if the requirements call for it. We can replace Google Cloud Run or use multiple scaling services. All that the design consumes is an HTTP URL for each heavy compute service.

- Adding a new process to the pipeline is super easy. There is no need to spend time on any other part of the system while adding a new process. The only work required is adding consumers, as consumers might be process-specific.

- The same pipeline/system can be used by other services for a completely different set of tasks. That means that once we set up this pipeline, it can be used across the board for different data processing requirements.

- Once deployed, there are literally zero operations involved and minimal development is required.

This architecture represents the backbone and the idea of event-driven scaling. The actual systems are much more complex than this.
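The plugin idea can be illustrated with a small registry in which each process is described only by a queue name and a service URL. All names and URLs below are hypothetical; in this sketch, adding a process is a single registration call.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessStep:
    queue: str        # queue the step's consumers listen on
    service_url: str  # HTTP endpoint of the heavy compute service


# Hypothetical names and URLs, for illustration only.
PIPELINE = {
    "text_classification": ProcessStep("text.jobs", "https://example.invalid/text"),
    "image_classification": ProcessStep("image.jobs", "https://example.invalid/image"),
}


def add_process(name: str, queue: str, service_url: str) -> None:
    # Registering a new step does not touch any existing step.
    if name in PIPELINE:
        raise ValueError(f"process {name!r} already registered")
    PIPELINE[name] = ProcessStep(queue, service_url)
```

For example, `add_process("background_removal", "background.jobs", "https://example.invalid/background")` adds a third step without changing the two existing ones.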

Let me know your thoughts on this.
