Of Nature, Black Magic, AI and Hacking

Confusing title? It was intentional. There is a parallel between black magic and hacking which becomes clear in AI.

We as a species are in a race to develop AI to the point where all the difficult, laborious work is handled by machines. We have automated most of the factory floor. We have automated the way we handle finances. We are automating the driving of cars and trucks, and are in the process of automating last-mile logistics like the delivery of goods.

Automation is good: it makes processes more economical, more efficient and easier to scale. Look at the timeline: within 300 years of the industrial revolution, we went from horse-drawn carriages to electric vehicles. In the 1700s the job description of “taxi driver” did not exist. Soon, it may never exist again.

Automation is not the worry. Autonomy is the worry.

We are creating systems that claim to be “autonomous” in their decision making. We have intelligent systems handling our finances and news feeds, and even consumer robots that behave like humans and influence us. We have micro drones replacing bees in agriculture, nanobots working like microbes, and drones fighting our wars. However, unlike simple robotic automation, these autonomous systems are NOT based on simple logic. They have fuzzy logic in them, and their decisions are always expressed as probabilities.

Machines can be hacked.

Herein lies the problem. Let me define hacking from a hacker’s perspective. Hacking is essentially making a system behave the way YOU want instead of the way it was intended to. It need not be for a negative purpose, but any machine can be hacked. If a system is driven by predefined logic, we can immediately catch it doing something it’s not supposed to. However, the new-age systems are autonomous and are essentially our attempt to mimic a brain capable of making decisions. Biologically, the brain is the only organ that makes decisions. In fact, we have even named the base technology neural networks. All autonomous systems have an AI engine, and are driven at their core by model files generated by yet another system.

The true nature of these AI engines built by us can only be “predicted” but never definitively known. The output of every engine is a probability score shaped by the training data behind its model file. So, hacking an AI system would mean changing the behavior or decision-making logic of the system, in some cases trivially, by just replacing the model file. In other cases, where the system is a self-learning one that keeps learning from live input, a stream of bad data can be fed in to change its behavior within hours (Microsoft’s Tay is a classic example).

Nature does not allow this.

We cannot change a part of the brain in an animal and make it behave the way we want instantly. The brain is designed to be complex, to ensure stability. We cannot make a deer suddenly eat a rabbit. Nor can we make a perfectly “sane” man drive his car into a building.

But in history, we hear of people with powers accomplishing this with animals and human beings. It was termed black magic, and these people were tagged as witches or wizards and generally kept away from society.

Hacking and black magic can be equated: they are parallel in their intent, in the case of AI and nature respectively. Hacking is an attempt to alter a machine brain, while black magic attempts to alter the biological brain. The difference is, black magic is a myth… but hacking is very real.

The AI systems that we are building need to have checks and balances, just like in nature: an inherent measure to make sure the brain operates within a confined capacity. The human brain is allowed to be creative, but any brain (any human) that behaves out of the ordinary is quickly identified.
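One very small check of this kind targets the model-file swap mentioned earlier. The sketch below is only an illustration, not a description of any particular product: it assumes a digest of the model file was recorded when the model was released, and the names `sha256_of_file`, `model_is_untampered` and `model.bin` are made up for the example.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def model_is_untampered(path: Path, expected_digest: str) -> bool:
    """Compare the file's current digest with the one recorded at release."""
    return hmac.compare_digest(sha256_of_file(path), expected_digest)


# Demo with a throwaway file standing in for a real model file (hypothetical).
with tempfile.TemporaryDirectory() as tmp:
    model_path = Path(tmp) / "model.bin"
    model_path.write_bytes(b"original weights")
    trusted_digest = sha256_of_file(model_path)  # recorded at release time

    check_before_swap = model_is_untampered(model_path, trusted_digest)

    model_path.write_bytes(b"attacker weights")  # simulate a swapped model file
    check_after_swap = model_is_untampered(model_path, trusted_digest)

print(check_before_swap, check_after_swap)
```

A check like this only catches a replaced file. It does nothing against a self-learning system that is slowly fed bad data, which is why the behavior of the system has to be watched as well.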

AI systems need to be controlled by design.

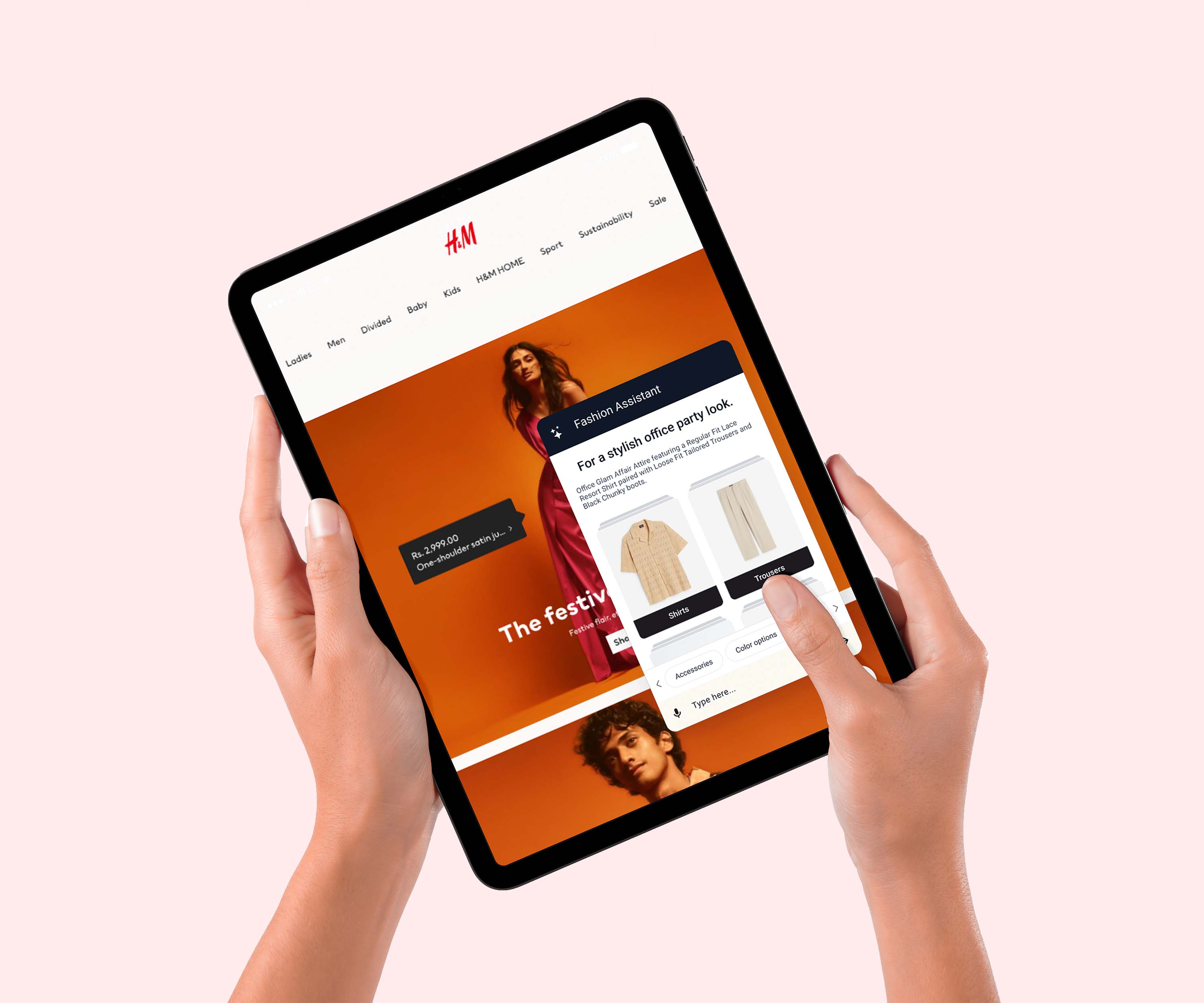

We are building fashion AI at Streamoid. The consequences of a bad AI system in this domain are far less dangerous than those of a badly behaving self-driving vehicle. Even so, we have implemented expert systems on top of our AI to keep unintended results out of the output. The system is allowed to be creative within a confined space. Even then, this type of implementation only allows us to identify a badly behaving AI model (machine brain) and quickly fix it.

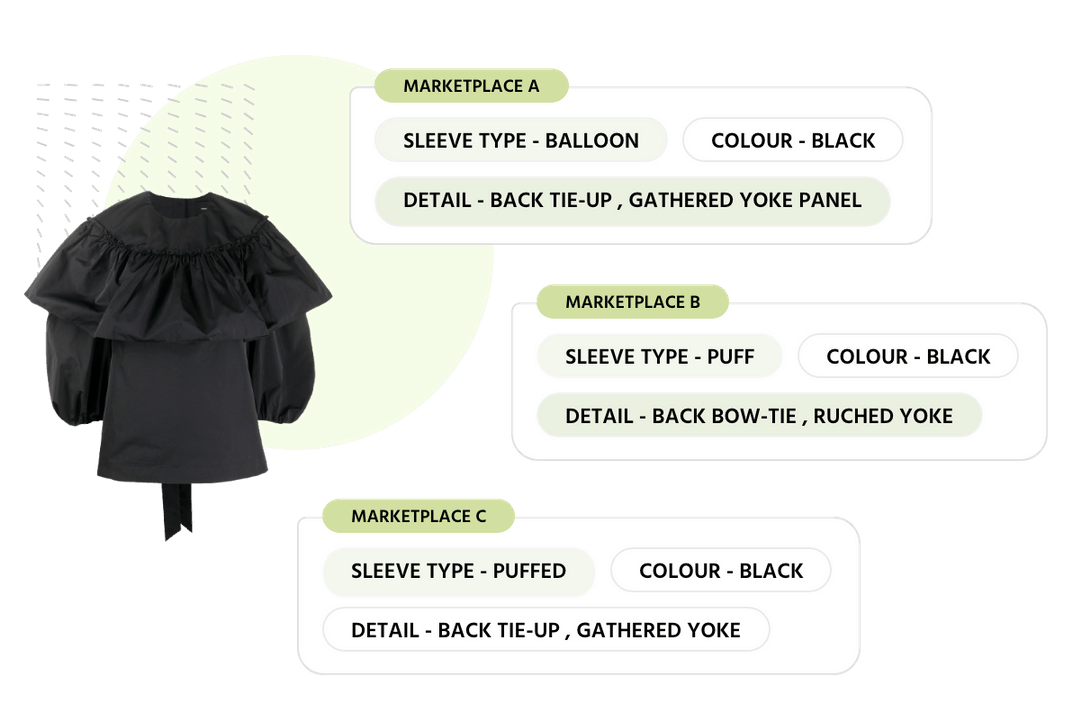
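To make the idea of an expert system sitting on top of a model concrete, here is a minimal sketch. It is not Streamoid's implementation: the rule table, the `Suggestion` type, the thresholds and the function names are all assumptions invented for illustration. The model proposes items with a confidence score, hand-written rules decide what is allowed through, and a high rejection rate is treated as a sign that the model itself is misbehaving.

```python
from dataclasses import dataclass

# Hypothetical rule table: categories that may be paired with an anchor item.
ALLOWED_PAIRINGS = {
    "dress": {"heels", "flats", "handbag", "jacket"},
    "jeans": {"t-shirt", "shirt", "sneakers", "jacket"},
}


@dataclass
class Suggestion:
    category: str
    score: float  # model confidence in [0, 1]


def apply_rules(anchor, suggestions, min_score=0.5):
    """Split model suggestions into those that pass the rules and those that fail."""
    allowed = ALLOWED_PAIRINGS.get(anchor, set())
    accepted, rejected = [], []
    for suggestion in suggestions:
        if suggestion.category in allowed and suggestion.score >= min_score:
            accepted.append(suggestion)
        else:
            rejected.append(suggestion)
    return accepted, rejected


def model_looks_unhealthy(accepted, rejected, max_reject_rate=0.5):
    """Flag the model when the rules reject more than the tolerated share."""
    total = len(accepted) + len(rejected)
    return total > 0 and len(rejected) / total > max_reject_rate


# A mostly sensible model output for a dress.
good_output = [Suggestion("heels", 0.91), Suggestion("jacket", 0.72),
               Suggestion("sneakers", 0.88)]
good_accepted, good_rejected = apply_rules("dress", good_output)

# An output that mostly breaks the rules, e.g. after a bad model update.
bad_output = [Suggestion("sneakers", 0.90), Suggestion("t-shirt", 0.80),
              Suggestion("heels", 0.60)]
bad_accepted, bad_rejected = apply_rules("dress", bad_output)

print([s.category for s in good_accepted],
      model_looks_unhealthy(good_accepted, good_rejected))
print([s.category for s in bad_accepted],
      model_looks_unhealthy(bad_accepted, bad_rejected))
```

The rules never make the model smarter. They only confine what reaches the user and give an early signal that the model behind them needs to be looked at.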
